Is AI Suitable for Large Scale Scraping?

With the hype around AI continuing to grow as new technologies flood onto the market, it can be tempting to assume that AI is the solution to most problems you are hoping to solve, including web scraping. However, there are several factors to weigh before using AI to solve a problem, and they should inform your decision-making process.

# What is AI?

Artificial intelligence is a blanket term for any application that can perform tasks that typically require human intelligence, such as generating text or writing code. A key benefit of this for web scraping is the ability to analyse large amounts of data and recognise patterns in it. Today, people often use "AI" as an umbrella term for large language models (LLMs) and generative AI. ChatGPT, Gemini and Copilot are some of the more popular LLM-powered tools on the market at the moment.

These AI models are trained on a massive amount of data, including books, web pages, news articles, social media posts and code snippets. Although most developers of these models are quite tight-lipped about how much data is required for training, it is reasonable to assume that the amount runs into the tens of terabytes.

Currently, Axiom.ai offers two steps that allow you to integrate generative AI (GenAI) into your automations: the "Extract data with ChatGPT" and "Generate Text with ChatGPT" steps. These can help you analyse a large set of data scraped from a web page, or generate text to use in your Interact steps. We plan on expanding this functionality in future updates.

The largest benefit people are seeing with GenAI is the ability to generate content and code. This can speed up content or code creation, but it should be used sparingly to avoid over-reliance on it. With content creation, it's important to note that the model may not have all of the context it needs to assist you. For example, asking it to list the steps required to build an automation in Axiom.ai currently gives you about 75% of the right answer, but it also "creates" new steps that are not part of the tool, which can cause additional confusion for users. Being more precise with your prompts can mitigate some of these issues.

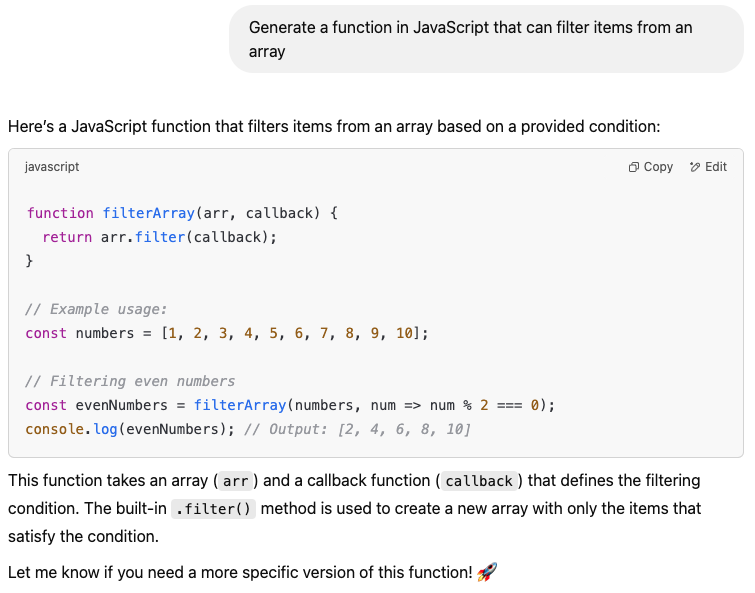

When it comes to code generation, GenAI again offers a large number of benefits, but there are common pitfalls that catch newer developers. While it can speed up development, we are seeing many developers add code to their codebase that they may not fully understand. GenAI will often explain the code it has written, but it's still critical that developers understand the code they are copying into a project, just as when a developer blindly copies code from Stack Overflow! Blindly copying code into your project may get the job done, but it will decrease the maintainability of your project. Instead, use GenAI as a learning tool: ask the model to explain the code so you can learn how to implement it yourself or, at a minimum, so you can modify it or add your own comments.

Both content and code generation can provide benefits for large-scale scraping, such as generating custom CSS selectors so that your scraper efficiently targets the correct elements on the page. Let's dive into some other considerations.

# Cost

Using AI as an individual user tends not to be too expensive, but if you decide to use it for large-scale operations such as scraping, you should consider the cost of these services. Most providers, such as OpenAI (ChatGPT), offer an API that is billed at a pay-as-you-go rate. Usage is measured in "tokens", which represent the text you send to the API, and you are charged per token. OpenAI states on their website:

> You can think of tokens as pieces of words, where 1,000 tokens is about 750 words.

As of the time of writing, they charge $2.50 per 1 million input tokens. Your input includes your prompt, along with all of the data that you send with it.
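A rough back-of-the-envelope estimate makes this concrete. The sketch below applies OpenAI's "1,000 tokens is about 750 words" rule of thumb and the $2.50 per million input tokens price quoted above. The page count and word counts are made-up example figures, real tokenisation varies with the text, and output tokens (which are billed separately) are not included.

```javascript
// Price per 1 million input tokens quoted above; check the provider's current pricing.
const PRICE_PER_MILLION_INPUT_TOKENS = 2.5;

// Rule of thumb: 1,000 tokens is about 750 words (real tokenisation varies).
function estimateTokens(wordCount) {
  return Math.ceil((wordCount * 1000) / 750);
}

// Estimate input tokens and cost (USD) when each scraped page is sent in its own prompt.
function estimateInputCost(pageCount, wordsPerPage, promptWords) {
  const tokensPerRequest = estimateTokens(wordsPerPage + promptWords);
  const totalTokens = tokensPerRequest * pageCount;
  const costUsd = (totalTokens / 1_000_000) * PRICE_PER_MILLION_INPUT_TOKENS;
  return { tokensPerRequest, totalTokens, costUsd };
}

// Hypothetical example: 10,000 pages of ~1,450 words each, plus a 50-word prompt.
const estimate = estimateInputCost(10_000, 1_450, 50);
console.log(estimate); // { tokensPerRequest: 2000, totalTokens: 20000000, costUsd: 50 }
```

Every page and every repeated prompt adds to the total, so the cost grows linearly with the number of pages you scrape.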

This becomes expensive for operations like large-scale scraping. If you use AI for data manipulation, every piece of scraped data you send to the service adds to your input token count. For example, if you scrape the "contact us" page of a list of URLs and want to extract the email addresses, you may end up inserting a large amount of page content into each prompt. Recently, this has often been seen as the easiest and most reliable solution, but that's not always the case.

Instead of sending all of the data in a prompt and using a large number of input tokens, consider manipulating the data locally with a short JavaScript script, or exporting it to a service such as Google Sheets. Check out our Manipulating data with regex snippets to learn more about doing this locally or in your Axiom.ai automation. As an example, the "Extracting data" snippet uses a regular expression to pull a value out of scraped text:

```javascript
// Let's use data scraped from a website; this contains the string "115 Records".
const data = '[scrape-data?all&0]';

// We just want the number of records here, so let's extract it with regex.
// \d+ matches one or more consecutive digits; match() returns null if there are none.
const match = data.match(/\d+/);
const records = match ? match[0] : '';

// Return this to be included in the 'code-data' data token.
return records;
```
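The snippet above pulls out a single number. For the "contact us" example from earlier, a similar local approach could pull out email addresses instead. This is a minimal sketch rather than one of our published snippets: the `extractEmails` helper and the sample text are hypothetical, and the pattern is a pragmatic approximation rather than a full email validator. Inside an automation you would replace the sample string with your scraped data token and `return` the result, as the snippet above does.

```javascript
// Hypothetical sample text standing in for a scraped "contact us" page.
const pageText = "Contact sales@example.com or support@example.co.uk. Sales: SALES@example.com";

// Simple email pattern: good enough for most contact pages, not a full RFC 5322 validator.
const EMAIL_PATTERN = /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g;

// Find every match, normalise to lower case and drop duplicates (a Set keeps first-seen order).
function extractEmails(text) {
  const matches = text.match(EMAIL_PATTERN) || [];
  return [...new Set(matches.map((email) => email.toLowerCase()))];
}

const emails = extractEmails(pageText);
console.log(emails); // [ 'sales@example.com', 'support@example.co.uk' ]
```

This runs entirely on your machine, so it costs no API tokens no matter how many pages you process.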

# Environment

At its core, it is important to remember that AI models require a lot of resources to train and to query. When we ask AI a question or ask it to perform a task, this requires computing resources in the data centres where the provider runs these models. In 2018, OpenAI researchers Dario Amodei and Danny Hernandez published an article showing that the amount of compute used in the largest AI training runs had been increasing with a 3.4-month doubling time (OpenAI, 2018). This is massive when compared to Moore's Law, which has a 2-year doubling period.
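To put those doubling times in perspective, the sketch below compares the growth each rate implies over two years. It is simple arithmetic (growth = 2^(months / doubling time)) and assumes each trend holds steadily for the full period.

```javascript
// Growth factor after `months` for a quantity that doubles every `doublingMonths`.
function growthFactor(months, doublingMonths) {
  return 2 ** (months / doublingMonths);
}

// Compare two years of growth at each doubling time.
const aiCompute = growthFactor(24, 3.4); // 3.4-month doubling
const mooresLaw = growthFactor(24, 24);  // 2-year doubling
console.log(aiCompute.toFixed(0), mooresLaw); // 133 2
```

Over the same two years, a 3.4-month doubling time compounds to roughly 133 times the compute, compared with 2 times under Moore's Law.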

Computing requirements are expected to keep increasing as models are trained with ever more parameters and as "agents" become more generally available. It's expected that emissions from the ICT sector as a whole "will reach 14% of the global emissions, with the majority of those emissions coming from the ICT infrastructure, particularly data centres and communication networks" (Earth.org, 2023). More efficient code may mitigate this in the future, but current trends suggest that it is not going to be solved any time soon.

When considering using AI to power up your scraping workflows, you should factor this into your organisation's current carbon footprint, as adding AI to your workflows may significantly increase the carbon footprint of the task you are automating. An alternative may be to automate these tasks locally, for example by running them on your own PC with Axiom.ai, Automator or Power Automate.

# Technical Challenges

Even if you are not a bot wandering the internet, you will have run into a CAPTCHA or a bot-detection system (such as Cloudflare's) that is there to confirm you are human. While these are simple for a human to pass, they are effective at keeping bots out of the sites they protect. They can stop your bot in its tracks and prevent it from scraping content; at a large scale, this can waste a lot of time, because it's not always obvious when a bot is stuck at one of these checkpoints. Features like Bypass Bot Detection in Axiom.ai, puppeteer-extra-plugin-stealth, or other Puppeteer plugins can help you get around these limitations.
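Because a stuck bot is easy to miss, it can help to check each page for signs of a challenge before treating its content as real data. The sketch below is a simple heuristic, not a reliable detector: the `looksLikeBotChallenge` helper is hypothetical, and the marker phrases are assumptions based on wording these pages commonly show, so adjust them to the challenges you actually encounter.

```javascript
// Heuristic marker phrases that often appear on CAPTCHA or bot-check pages (assumptions).
const CHALLENGE_MARKERS = [
  /just a moment/i, // title commonly shown on Cloudflare's interstitial check
  /verify you are human/i,
  /captcha/i,
  /unusual traffic/i,
];

// Returns true if the page title or text looks like a bot check rather than real content.
function looksLikeBotChallenge({ title = "", text = "" }) {
  const haystack = `${title}\n${text}`;
  return CHALLENGE_MARKERS.some((pattern) => pattern.test(haystack));
}

console.log(looksLikeBotChallenge({ title: "Just a moment..." })); // true
console.log(looksLikeBotChallenge({ title: "Contact us", text: "Email hello@example.com" })); // false
```

With Puppeteer you could feed it `await page.title()` and `await page.evaluate(() => document.body.innerText)`, then log flagged URLs for a later retry instead of silently scraping the challenge page.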

For large-scale scraping, it's also recommended to use efficient proxy management. Routing all traffic through a single IP address may lead to that address being blacklisted by certain websites, preventing you from accessing them until you rotate your proxies. In a large organisation it may be possible to do this with your existing infrastructure; if not, plenty of services sell access to residential IPs, with the fee depending on how much traffic you expect to route through them.
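Proxy rotation can be as simple as handing out proxies in turn and skipping any that a site has started blocking. This is a minimal round-robin sketch: the `ProxyRotator` class and the proxy URLs are hypothetical placeholders for whatever your infrastructure or provider supplies.

```javascript
// Hypothetical proxy endpoints: replace these with the ones your provider gives you.
const PROXIES = [
  "http://proxy-1.example.com:8000",
  "http://proxy-2.example.com:8000",
  "http://proxy-3.example.com:8000",
];

// Hands out proxies in round-robin order, skipping any marked as blocked.
class ProxyRotator {
  constructor(proxies) {
    this.proxies = [...proxies];
    this.blocked = new Set();
    this.index = 0;
  }

  // Return the next usable proxy, or null if every proxy has been blocked.
  next() {
    for (let tried = 0; tried < this.proxies.length; tried++) {
      const proxy = this.proxies[this.index];
      this.index = (this.index + 1) % this.proxies.length;
      if (!this.blocked.has(proxy)) return proxy;
    }
    return null;
  }

  // Call this when a site starts refusing requests through a proxy.
  markBlocked(proxy) {
    this.blocked.add(proxy);
  }
}

const rotator = new ProxyRotator(PROXIES);
console.log(rotator.next()); // http://proxy-1.example.com:8000
```

With Puppeteer, one way to apply the chosen proxy is Chromium's `--proxy-server` flag, e.g. `puppeteer.launch({ args: [`--proxy-server=${rotator.next()}`] })`, which sets the proxy per browser instance.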

As frameworks like React, Vue and Angular have become popular, more web content is loaded into the page dynamically with JavaScript rather than served as the static pages we are used to. This means scrapers need mechanisms that wait for a page to finish loading before continuing with an interaction. Browser automation libraries such as Puppeteer and Playwright, which can drive headless browsers, can help you with this.
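Puppeteer's `page.waitForSelector()` and Playwright's built-in auto-waiting handle this for you. To show the underlying idea, here is a minimal, library-free sketch of a polling wait; the helper name `waitForCondition` and its default timeout and interval are hypothetical choices, not part of either library.

```javascript
// Polls `check` until it returns a truthy value, then resolves with that value.
// Rejects if the condition is not met within `timeoutMs` or if `check` throws.
function waitForCondition(check, { timeoutMs = 10000, intervalMs = 100 } = {}) {
  return new Promise((resolve, reject) => {
    const started = Date.now();
    const poll = () => {
      let result;
      try {
        result = check();
      } catch (err) {
        reject(err);
        return;
      }
      if (result) {
        resolve(result);
      } else if (Date.now() - started >= timeoutMs) {
        reject(new Error(`Condition not met: timed out after ${timeoutMs} ms`));
      } else {
        setTimeout(poll, intervalMs);
      }
    };
    poll();
  });
}
```

In a page context you might call it as `await waitForCondition(() => document.querySelector(".results li"))`, where the `.results li` selector is purely illustrative. A timeout keeps a large run from hanging forever on a page that never finishes loading.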

# Page interactions

More and more commonly, you will come across websites that do not reveal all of their data when the page first loads. The increased popularity of JavaScript libraries such as React.js means that data can be dynamically added to and removed from pages depending on how users interact with them; think of dropdowns, accordions or any other elements that you need to click to reveal information. Most AI agents and web crawlers will only scrape data that is currently visible on a page, and miss any data that is hidden or that requires an interaction before it is added. You can get around this by using a script, or a tool like Axiom.ai, to interact with the page before scraping so that the data is present, but this can add significant overhead at a large scale.
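As a sketch of that kind of pre-scrape interaction, the helper below clicks every collapsed toggle on the page so that hidden sections are rendered. It assumes the site marks collapsed accordions and dropdowns with the ARIA attribute `aria-expanded="false"`, which many sites do but not all; the `expandCollapsedSections` name is hypothetical.

```javascript
// Clicks every collapsed toggle inside `root` so hidden content is rendered before scraping.
// Assumption: collapsed sections are marked with aria-expanded="false".
function expandCollapsedSections(root, selector = '[aria-expanded="false"]') {
  const toggles = Array.from(root.querySelectorAll(selector));
  for (const toggle of toggles) {
    toggle.click();
  }
  return toggles.length; // how many toggles were clicked
}
```

In a browser you would call `expandCollapsedSections(document)`. Content revealed by a click may itself load asynchronously, so it is worth waiting for it before scraping, and every extra click and wait adds to the per-page overhead described above.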

The same concept applies to page loading. When a page first loads, it may lazy load content to speed up the initial load. This technique is popular for resource-heavy files such as images and video, which are not loaded until the user scrolls to the part of the page that contains them. This can trip up some AI agents and web crawlers for the reasons mentioned above: the content is not yet present on the page. You can get around this by scrolling the page before scraping it, which, again, can add significant overhead at a large scale.
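A common way to do this is to keep scrolling to the bottom until the page stops growing. The sketch below is meant to run in the page itself (for example, passed to Puppeteer's `page.evaluate()`); the `scrollUntilLoaded` name, the 500 ms delay and the 50-round cap are hypothetical defaults, and some sites may need longer delays for content to appear.

```javascript
// Scrolls to the bottom repeatedly until the page height stops growing,
// giving lazy-loaded content a chance to render. Runs in the page context.
async function scrollUntilLoaded({ stepDelayMs = 500, maxRounds = 50 } = {}) {
  const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));
  let lastHeight = -1;
  for (let round = 0; round < maxRounds; round++) {
    const height = document.body.scrollHeight;
    if (height === lastHeight) return round; // nothing new loaded since the last scroll
    lastHeight = height;
    window.scrollTo(0, height);
    await sleep(stepDelayMs); // wait for newly revealed content to load
  }
  return maxRounds; // safety cap for pages that never stop growing (e.g. infinite feeds)
}
```

The round cap matters at scale: infinite-scroll pages would otherwise keep a bot scrolling indefinitely.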

# Legal & Ethical Concerns

When building a large-scale scraper, it's essential to consider the legal and ethical implications. Always review the terms of service of the websites you're scraping, as violating them could lead to account suspensions or even legal consequences if the target service takes action. In many cases, terms of service explicitly prohibit scraping.

Privacy laws should also be top of mind for larger operations. The GDPR (EU) and CCPA (California) place restrictions on automated data collection, particularly where personal or sensitive data is involved. Intellectual property laws and website policies matter too: scraping and republishing certain types of data (e.g., news articles, research papers) can raise copyright concerns.

# Recommendation: Learn Prompt Engineering

Prompt engineering is an emerging field within AI. It is commonly defined as:

> The process of structuring or crafting an instruction in order to produce the best possible output from a generative artificial intelligence (AI) model.

To get the most out of GenAI, you need to be specific with your instructions. Prompt engineering seems simple on the surface, but remember that the prompt makes up part of the input tokens discussed in the cost section of this article, so your prompt should be as specific and as concise as possible to reduce the number of input tokens it consumes.
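As an illustration of "specific and concise", the sketch below builds a short prompt with an explicit output format and compacts the scraped text before it is sent. The `buildEmailPrompt` helper, its wording and the 4,000-character cap are hypothetical; note that a hard cap can cut off data, so size it to your pages.

```javascript
// Builds a short, specific extraction prompt around compacted page text.
function buildEmailPrompt(pageText, maxChars = 4000) {
  // Collapse runs of whitespace (newlines, indentation) and cap the length to save tokens.
  const compact = pageText.replace(/\s+/g, " ").trim().slice(0, maxChars);
  return [
    "Extract every email address from the text below.",
    "Reply with a JSON array of strings and nothing else.",
    "",
    compact,
  ].join("\n");
}

console.log(buildEmailPrompt("  Email:\n\n   sales@example.com   "));
```

Asking for a fixed format such as a JSON array also keeps the response short and easy to parse.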

# Wrapping up

When used correctly, AI can give your organisation real efficiency boosts, both for large-scale scrapers and for general operations. It can help speed up development activities such as writing code or generating content. However, there are certain things you should take into consideration before committing to AI for your large-scale scrapers, such as:

- The cost of using AI, specifically API costs.

- The environmental impacts of training and using AI.

- The technical challenges - bot blocking, proxies, and dynamically loading content.

- The legal & ethical concerns surrounding scraping.

Finally, we recommend learning prompt engineering so that your prompts get you results that meet your requirements while also using fewer input tokens, which reduces your API costs.

Have some thoughts you'd like to share? Drop a post over on our Reddit community; we would love to hear from you!