How to scrape emails from a website (with ChatGPT)

✉️ Scraping emails from websites can be challenging, since an address may be missing altogether or may sit in a different place on every page. If you're looking to gain skills to automate and build bots with powerful 🧠 ➕ 🤖 AI capabilities, you've come to the right place. We'll show you how to create a bot that uses AI to scrape emails from websites.

This guide is written for Axiom v4 🧨, the new version, which is coming soon. Email support if you’d like to try it. The steps also work in Axiom v3: use the interact step instead of the loop.

Looking for a ChatGPT web scraper? Try this ChatGPT web scraper template.

# Why would you want to scrape emails from websites?

To install this Gmail template, click 'Install template'. If you are a new user, click 'Install Chrome extension'. You will need to create a free Axiom.ai account before you can edit the template.

# Why use ChatGPT?

No two webpages are the same, and this matters most when you want to scrape thousands of websites for emails. Traditional scraper tools rely on CSS selectors to locate specific content, so to scrape at scale you’d need to set thousands of individual selectors or write complicated code. That's where AI comes in handy: it can find content regardless of how the page is structured. An AI can go to any webpage and search for data such as emails, dates, and URLs. You simply need to tell it what to look for.

# What exactly is a ChatGPT bot?

A ChatGPT web scraper bot 🧠 ➕ 🤖 combines browser automation with ChatGPT's AI. It's like a robot, but for the browser.

# How does the email scraper bot work?

The bot does the hard graft 🤖 🦾 - it's a digital version of you. This digital assistant works through a list of URLs from a Google Sheet, visits each page, and extracts the data you need using its AI capabilities. The bot then writes the scraped data (in this case email addresses) to another Google Sheet, leaving you with plenty of free time to sit back and enjoy a cup of coffee!
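If it helps to see that flow written out, here is a minimal Python sketch of the same read, visit, extract, write loop. It is not how Axiom works internally. The function names and the fake pages are made up for illustration, and simple stand-ins replace the browser, ChatGPT, and Google Sheets so the example runs on its own.

```python
from typing import Callable, Iterable

def run_email_bot(
    urls: Iterable[str],
    fetch_text: Callable[[str], str],
    extract_emails: Callable[[str], list],
) -> list:
    """Visit each URL, extract emails, and return (url, email) rows."""
    rows = []
    for url in urls:                         # work through the list of URLs
        page_text = fetch_text(url)          # visit the page
        for email in extract_emails(page_text):  # extract the data
            rows.append((url, email))        # collect a row for the output sheet
    return rows

# Hypothetical stand-ins: no browser, API key, or Google Sheet needed.
fake_pages = {
    "https://example.com": "Contact us at hello@example.com",
    "https://example.org": "No contact details here",
}

def fake_fetch(url: str) -> str:
    return fake_pages[url]

def fake_extract(text: str) -> list:
    # Crude placeholder for the AI step: keep any word containing "@".
    return [word for word in text.split() if "@" in word]

rows = run_email_bot(fake_pages, fake_fetch, fake_extract)
print(rows)
```

In the real bot, each of the three stand-ins corresponds to an Axiom step that you configure rather than code.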

# Do I need my own ChatGPT API Key?

You probably already have one, but they are simple to get hold of if you don't. Just follow OpenAI's instructions.

# Is it legal to scrape thousands of websites for emails?

The short answer is that no law or rule bans web scraping outright, but that doesn’t mean you can scrape everything. Scraping data that is publicly available online is generally legal.

Certain types of data, however, are protected by international regulations, so be mindful of what you can and can’t scrape when it comes to personal data, intellectual property, or confidential data.

# How do you make an email scraper bot?

With Axiom's no-code bot builder, you can create bots for web scraping without having to code. Use a simple point and click interface to create as many bots as you want! If you follow the steps below, you'll have a working bot using ChatGPT to extract emails in no time.

# Let’s learn how to build a bot to scrape emails

We also have a template you can try.

# 1. Set up your Google Sheet

Create a new Google Sheet. You can do this in your Chrome browser by entering 'sheet.new' into the address bar. Don’t forget to name your sheet something like 'Websites to scrape'. Add two tabs: one named 'URLs' and another named 'Data'. List the websites you want to scrape in column A of the 'URLs' tab, starting at A1.

# 2. Start from blank

To build your bot from scratch, click on 'Add first step'. This will open the step selector and you can start adding steps to your bot.

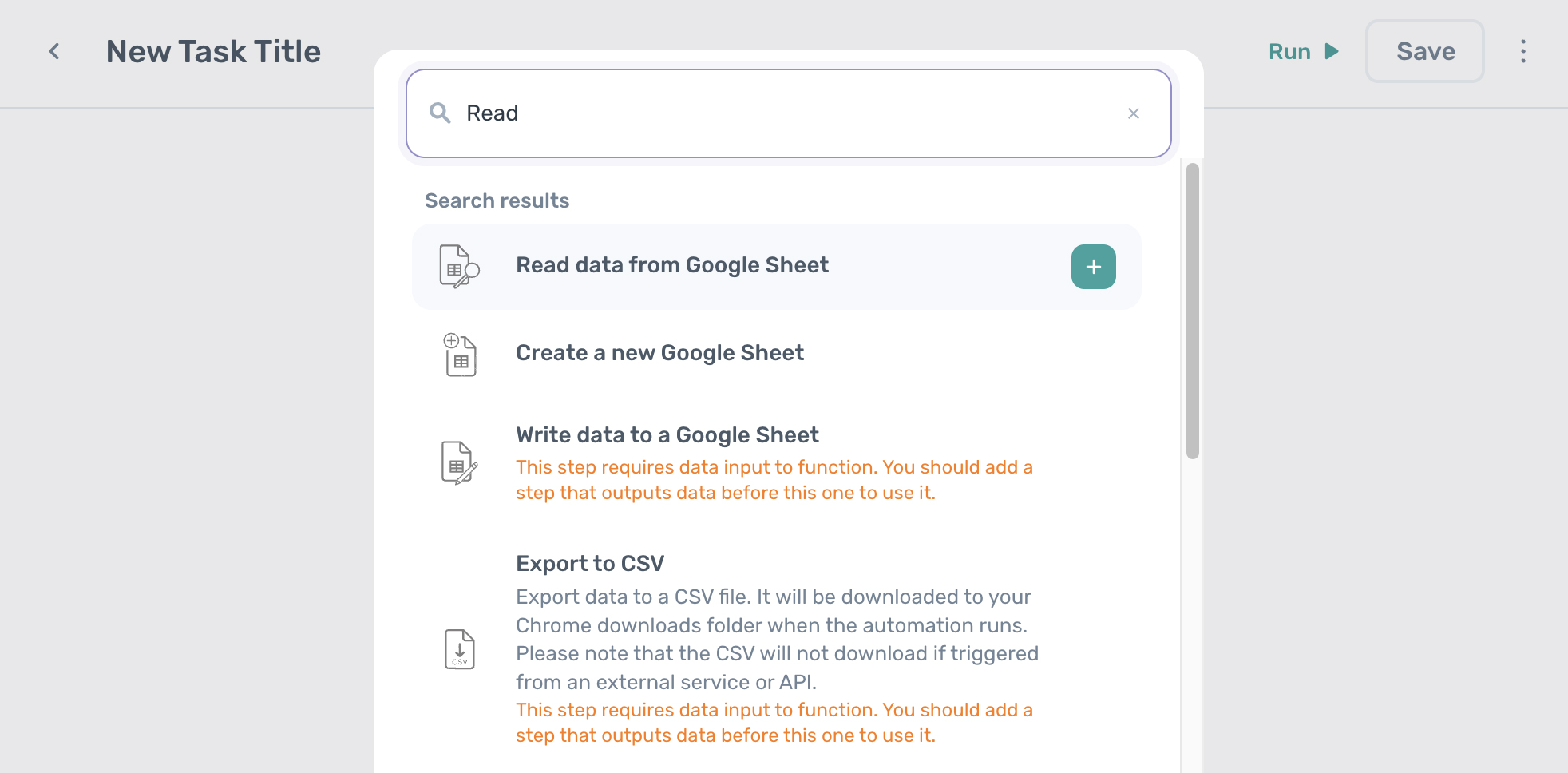

# 3. Add your first step: ‘Read data from a Google Sheet’

Use the Step Finder to search for ‘Read data from a Google Sheet’ and click on it. The step will be added to Axiom for you to configure.

In the field called 'Spreadsheet URL', you can search for the Google Sheet you created by name. Once found, click on it to select.

For 'Sheet name', click on the drop-down and select the 'URLs' tab.

In the 'First cell' field, toggle the switch and enter 'A1'. This setting tells the bot where to start reading data.

In the 'Last cell' field, click the toggle switch and enter 'A10'. This limits the bot to reading ten rows. That is fine for now. You can increase the number once you’ve tested the bot.

If you want to learn more about Google Sheet steps, watch these videos.

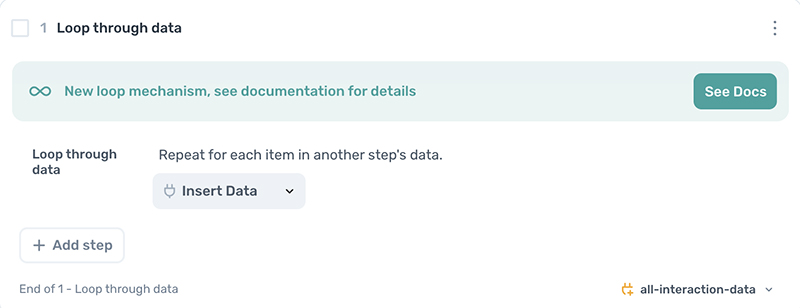

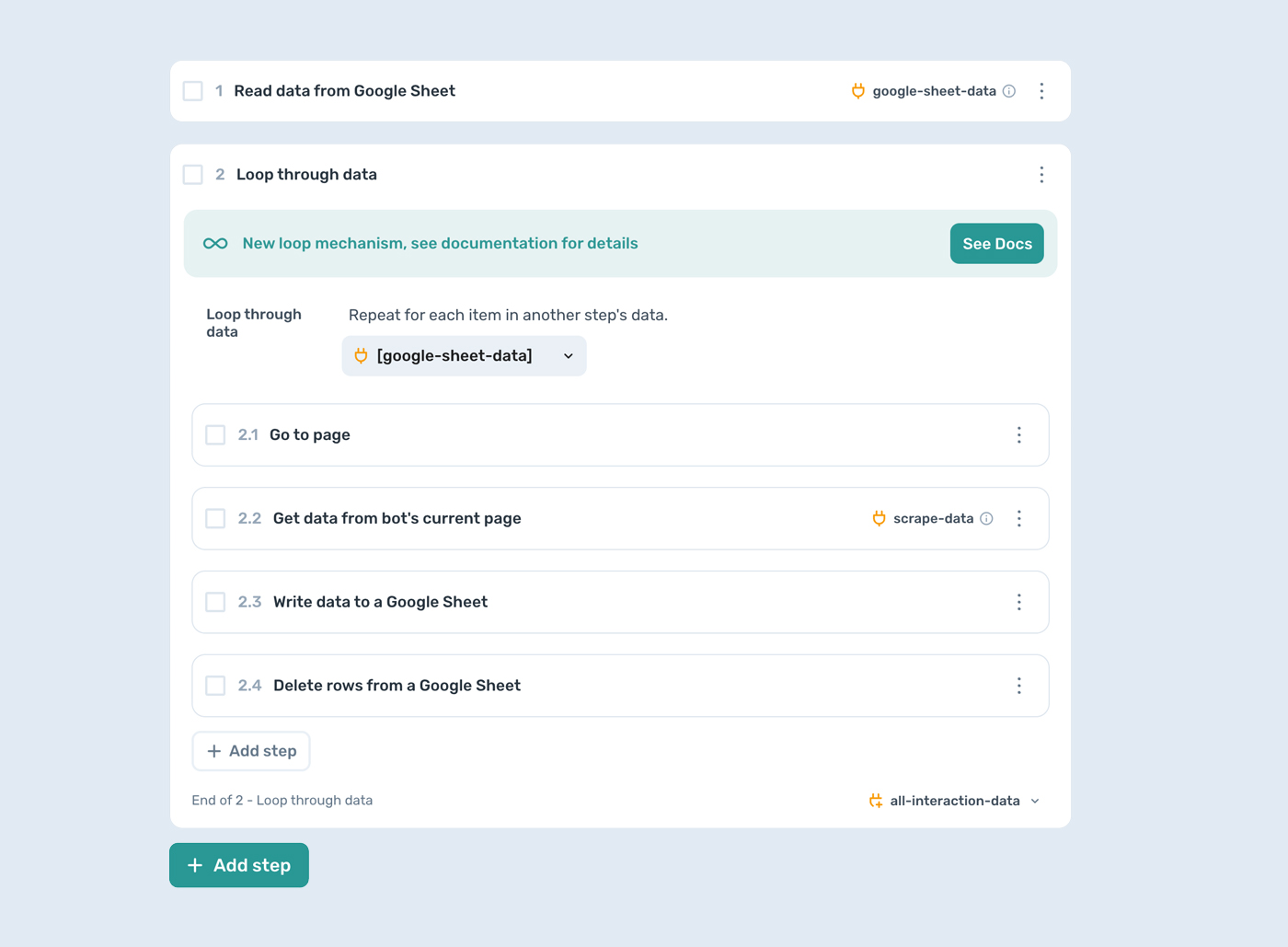

# 4. Add the ‘Loop through data’ step

Next, add a new step by entering ‘Loop through data’ into the Step Finder, and adding it. This step will allow your bot to loop through the rows of data stored in the Google Sheet. Make sure to add the steps you want to repeat inside the loop.

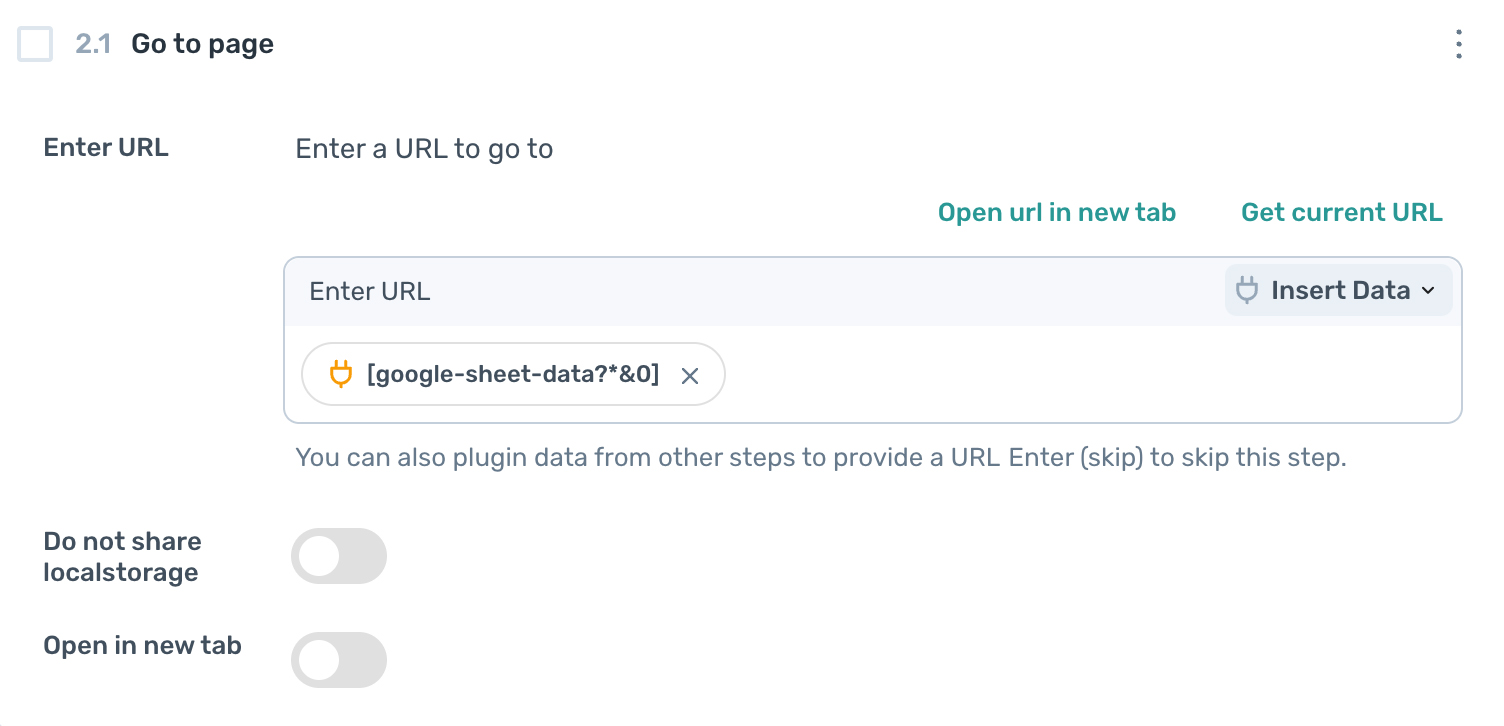

# 5. Add the ‘Go to page’ step inside the loop

Add the 'Go to page' step. In the 'URL' field, select 'Insert data' and choose the data from your Google Sheet, so that the bot knows which URL to visit on each pass of the loop.

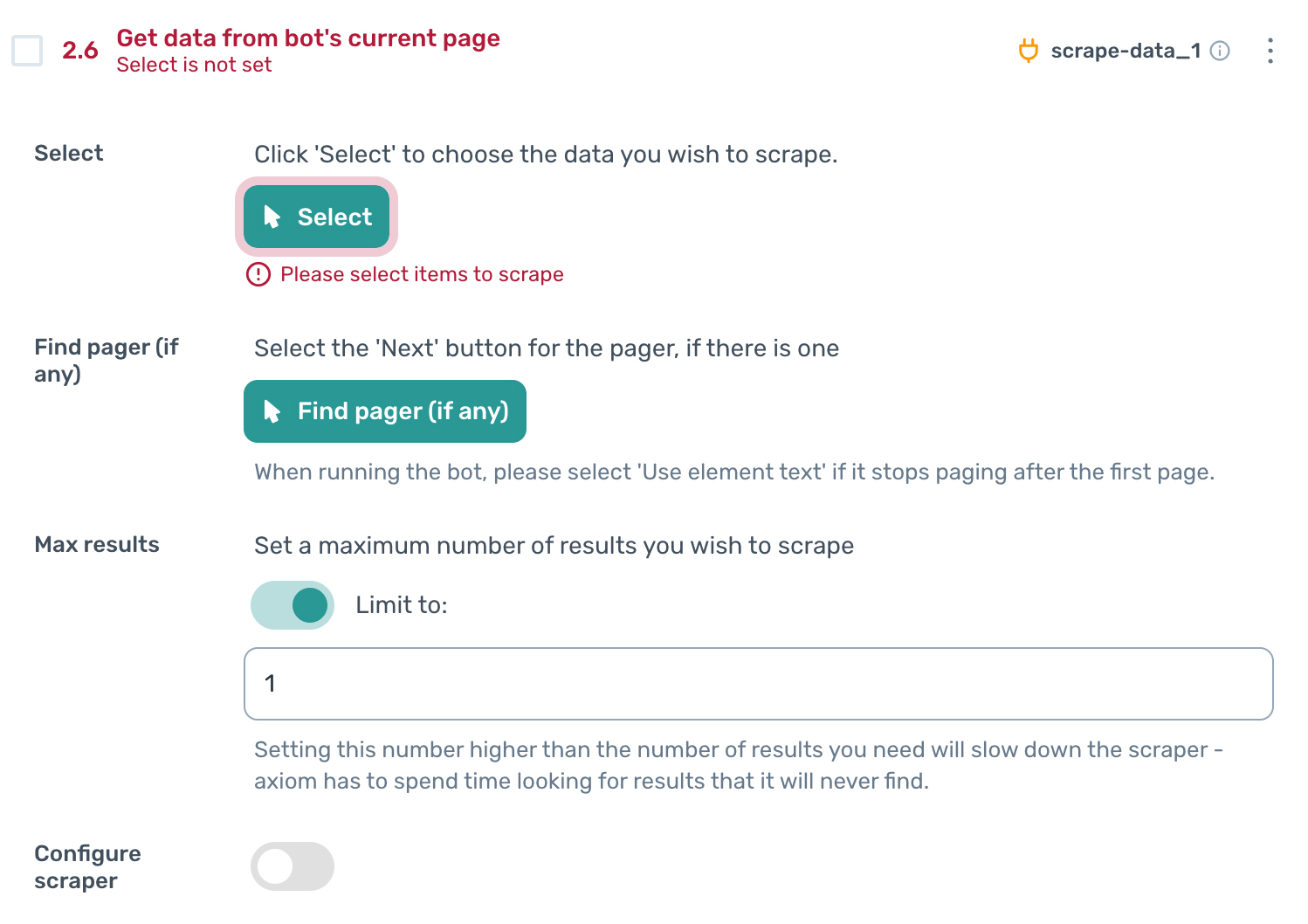

# 6. Add the ‘Get data from bot's current page’ step

Because we want to scrape many different webpages, we need a custom selector that matches an element found on every page. That common element is 'body'. To select it, click 'Select', choose 'Custom selector' in column A, and type in the word 'body'. Then click 'Confirm selector' and then 'Complete'.

Set the ‘Max results’ setting to ‘1’.
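To picture what scraping 'body' gives you, here is a rough Python sketch that pulls the readable text out of a page's `<body>` using only the standard library. It is an illustration under assumptions, not Axiom's implementation. In particular, skipping `<script>` and `<style>` is a choice made for this sketch, and the class and function names are hypothetical.

```python
from html.parser import HTMLParser

class BodyText(HTMLParser):
    """Collect text inside <body>, skipping <script> and <style> content."""

    def __init__(self):
        super().__init__()
        self.in_body = False
        self.skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag == "body":
            self.in_body = True
        elif tag in ("script", "style"):
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag == "body":
            self.in_body = False
        elif tag in ("script", "style") and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that sits inside <body>.
        if self.in_body and not self.skip_depth and data.strip():
            self.chunks.append(data.strip())

def body_text(html: str) -> str:
    """Return the page's body text as one space-separated string."""
    parser = BodyText()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)

sample = (
    "<html><head><title>T</title></head><body><h1>Team</h1>"
    "<script>var x=1;</script><p>Email: jo@example.com</p></body></html>"
)
print(body_text(sample))
```

Because every page has a body, one selector covers every site in your list, and the result is a single block of text per page.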

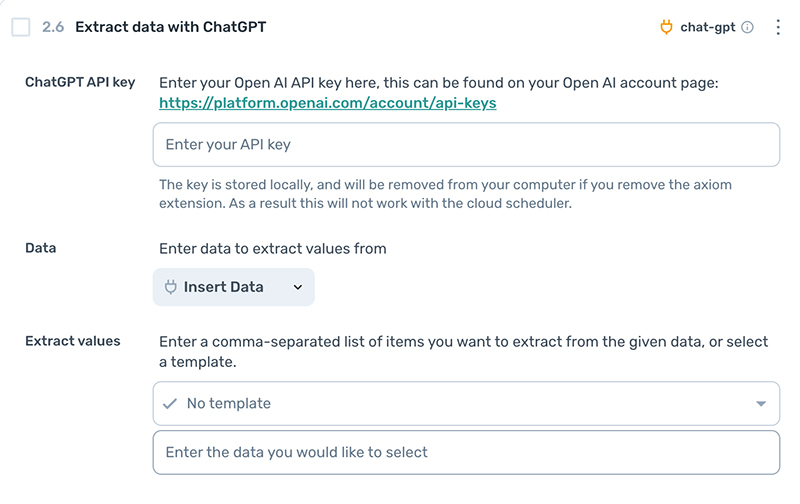

# 7. Add the ‘Extract data with ChatGPT’ step

You will need to enter your API key for this step to work, so make sure you have it to hand.

Then set the 'Data' field to 'Scrape data'.

Next, in the ‘Extract values’ field, tell the AI what data you want to extract, by entering a comma-separated list of items, for example email, address, dates, URL etc. You may want to experiment a little here.
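If you're curious how a comma-separated list like this could become an instruction for the model, the sketch below builds a request body in the shape used by OpenAI's Chat Completions endpoint (`POST https://api.openai.com/v1/chat/completions`). The prompt wording, the function name, and the default model name are assumptions for illustration. Axiom's actual prompt is not documented here. The sketch only builds the request and does not send it.

```python
def build_extraction_request(page_text: str, extract_values: str,
                             model: str = "gpt-4o-mini") -> dict:
    """Turn 'email, address, URL' plus page text into a chat request body."""
    # Split the comma-separated list and drop empty entries.
    fields = [f.strip() for f in extract_values.split(",") if f.strip()]
    prompt = (
        "Extract the following items from the text below: "
        + ", ".join(fields)
        + ". Reply with a JSON object using those item names as keys, "
        "and use null for anything you cannot find.\n\n"
        + page_text
    )
    return {
        "model": model,  # assumed model name; use whichever your key supports
        "messages": [{"role": "user", "content": prompt}],
    }

request = build_extraction_request("Contact: a@b.co", "email, address , ,URL")
print(request["messages"][0]["content"])
```

Being specific in the list tends to matter: 'email' and 'phone number' give the model a clearer target than 'contact details'.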

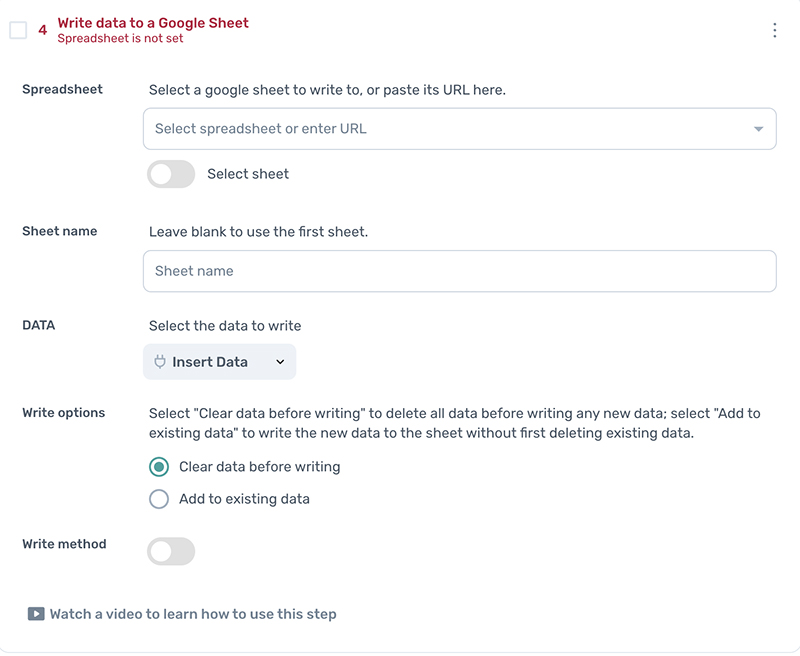

# 8. Write data to a Google Sheet

Now we’re going to write the data that ChatGPT extracted to a Google Sheet.

Click 'Add step' in the loop and search for 'Write data to a Google Sheet'. In the 'Spreadsheet URL' field, search for the sheet you created at the beginning of this tutorial.

In the 'Sheet name' field, select 'Data'.

In the 'Data' field, select '[chat-gpt]'.

Finally, in the ‘Write options’ field, click the ‘Add to existing Data’ option.
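The reason for choosing 'Add to existing Data' is that the step runs once per pass of the loop, so each write should land below the previous one instead of replacing it. As a rough analogy only, here is the same idea in Python with a CSV file standing in for the 'Data' tab. The file name and helper names are hypothetical.

```python
import csv
import os
import tempfile

def append_rows(path: str, rows: list) -> None:
    """Add rows to the end of the file, keeping what is already there."""
    with open(path, "a", newline="", encoding="utf-8") as f:
        csv.writer(f).writerows(rows)

def read_rows(path: str) -> list:
    """Read every row back as a list of lists."""
    with open(path, newline="", encoding="utf-8") as f:
        return list(csv.reader(f))

# A temporary CSV file stands in for the 'Data' tab.
sheet_path = os.path.join(tempfile.mkdtemp(), "data.csv")
append_rows(sheet_path, [["https://example.com", "hello@example.com"]])  # pass 1
append_rows(sheet_path, [["https://example.org", "team@example.org"]])   # pass 2
print(read_rows(sheet_path))
```

Opening the file in write mode (`"w"`) instead of append mode (`"a"`) would leave only the last page's results, which is the behaviour you want to avoid inside a loop.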

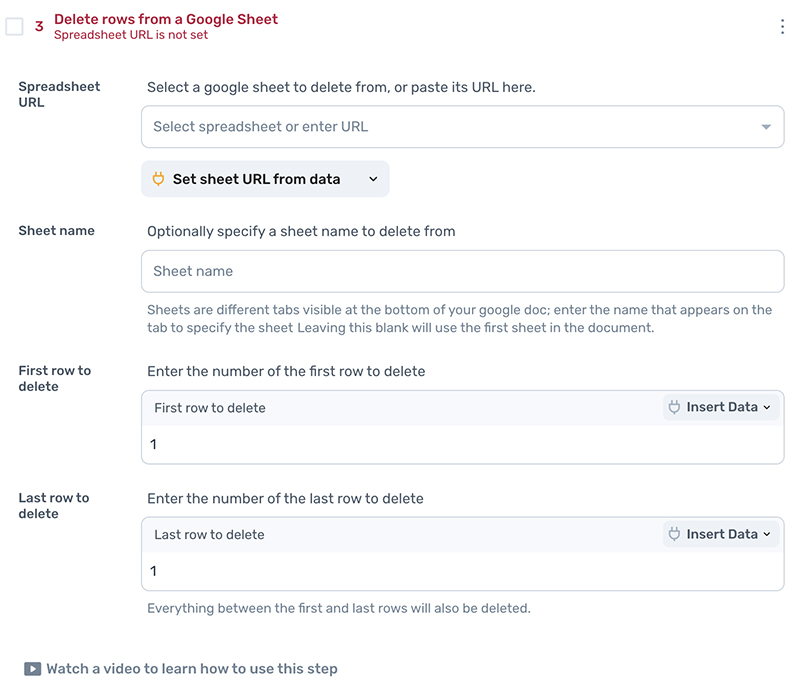

# 9. Within the loop step, add ‘Delete rows from a Google Sheet’ step

Using the Step Finder, search for and select 'Delete rows from a Google Sheet'. This step deletes the URL that was just scraped, which prevents the same row from being scraped again.

In 'Spreadsheet URL' click to add the spreadsheet.

Then, in 'First row to delete' enter the number 1 and repeat this in 'Last row to delete', so that both are set to 1. We only want to delete one row per loop.

Set the 'Sheet name' to the 'URLs' tab you created in step 1.
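Deleting row 1 after every pass turns the URL list into a simple queue: whatever is still in the sheet is work that hasn't been done yet. The Python sketch below illustrates that pattern with a plain list standing in for the sheet. The function name and the sample data are made up for the example.

```python
def process_queue(url_rows: list, handle) -> list:
    """Handle the first row, then delete it, until no rows remain."""
    processed = []
    while url_rows:
        url = url_rows[0]      # always read the first remaining row
        handle(url)            # scrape, extract, write
        processed.append(url)
        del url_rows[0]        # first row to delete = 1, last row to delete = 1
    return processed

def flaky_handler(url: str) -> None:
    # Simulate a page that fails to load partway through a run.
    if url == "https://broken.example":
        raise RuntimeError("page failed to load")

sheet = ["https://a.example", "https://b.example", "https://broken.example"]
try:
    process_queue(sheet, flaky_handler)
except RuntimeError:
    pass

print(sheet)  # only the unfinished row is left
```

If a run stops partway through, the rows left in the list are exactly the ones still to be scraped, so the next run picks up where the last one stopped.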

# 10. Ready to test

Now you’re ready to test with the desktop app by clicking 'Run w/desktop app'.

When you’re done testing and you want to increase the size of your loop, edit the ‘Last cell’ field mentioned in step 3, and run again.

# 11. Running the bot

You can run this bot in the cloud or in the desktop app. If you want to learn more about scheduling, click here.

# Design pattern for your bot

Your assembled ChatGPT bot should resemble this image.

# Issues you may encounter with your email scraper

- The bot only scrapes 10 rows. In the 'Read data from a Google Sheet' step, change the 'Last cell' value.

- ChatGPT returns no data. Try adjusting your 'Extract values'.

- No data or incorrect data is written to Google Sheets. Check that the ChatGPT data has been inserted into the 'Write data to a Google Sheet' step.
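When you are unsure whether a page really contained an email, a quick pattern check on the page text can help you tell a missing address apart from an extraction problem. The sketch below is a deliberately loose check and not a full email validator. The pattern and function name are illustrative and not part of Axiom.

```python
import re

# Loose pattern: something@domain.tld. It will miss unusual but valid addresses.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

def find_emails(text: str) -> list:
    """Return email-shaped strings in order of appearance, without duplicates."""
    found = []
    for match in EMAIL_PATTERN.findall(text):
        email = match.lower()
        if email not in found:
            found.append(email)
    return found

print(find_emails("Write to Sales@Example.com or sales@example.com."))
```

If this finds an address that ChatGPT missed, the wording of your extract values is the likely culprit. If it finds nothing either, the page probably doesn't list an email in its text.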

Don't forget we offer excellent customer support. If you need help, get in touch.

# Conclusion

Congratulations 🥳👨‍🎓🥳, you've learned how to make and use a ChatGPT AI bot to extract data. With this newly acquired skill, you know how to scrape data, loop through actions, and write the results to a Google Sheet. The sky's the limit with your new AI superpowers. 🦸

# What else can I automate with ChatGPT and Axiom.ai?

If you're excited, here are some ideas for other bots: extract data from your email app (such as Gmail), generate content, and send direct messages on Instagram. We have steps to extract data with AI and to generate text with AI. If you are looking to do an email blast on Gmail, we have a guide for that.

If you want to read more about browser automation and AI, read this post.