Extracting content from a web page

# Get started with scraping

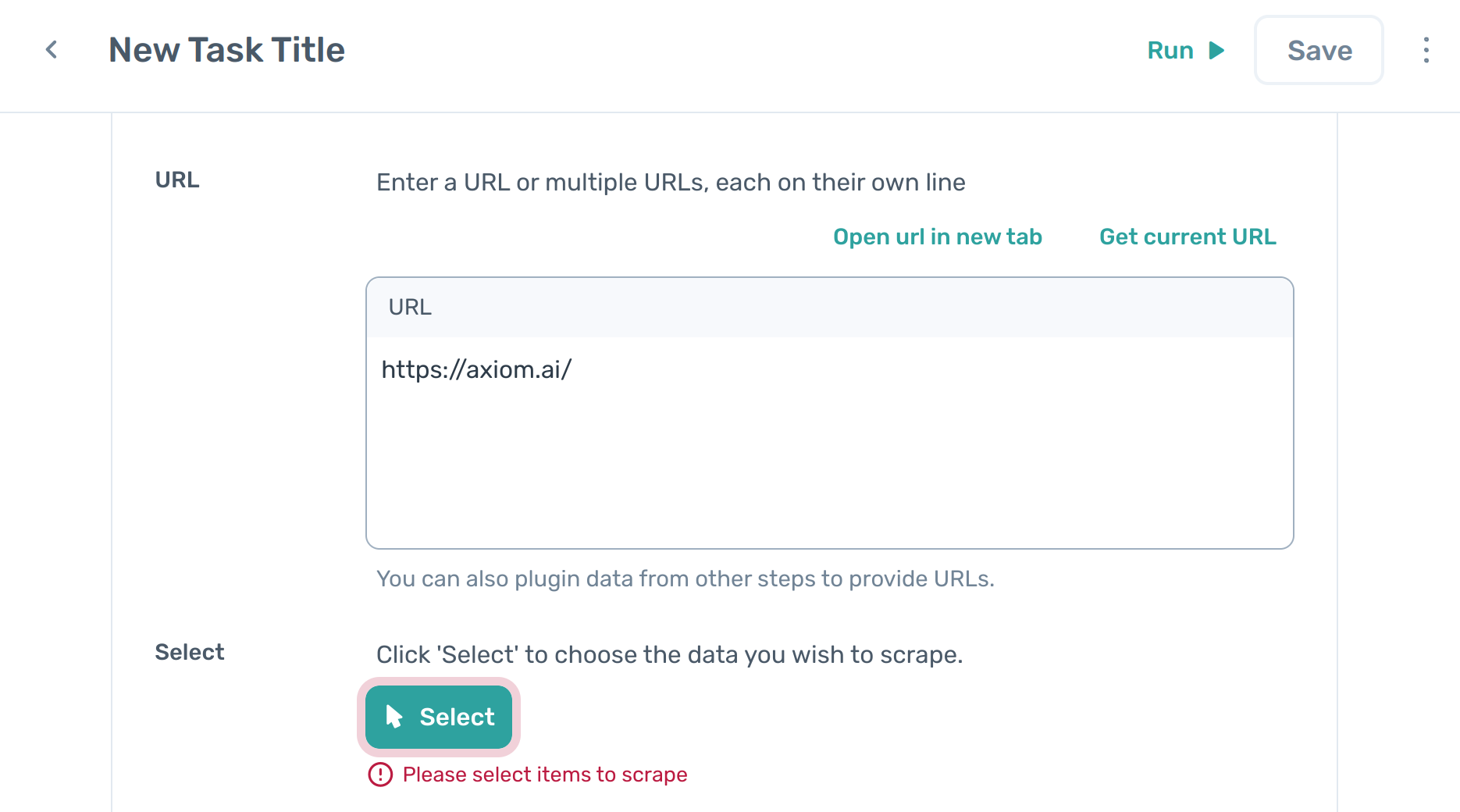

To start scraping data, add the Get data from a url step from the step list. The step auto-populates the current page as the default scrape target. To add the step:

In the dashboard, click “New Automation”.

Click “+ Add first step”.

Click "Scrape", then Get data from a url.

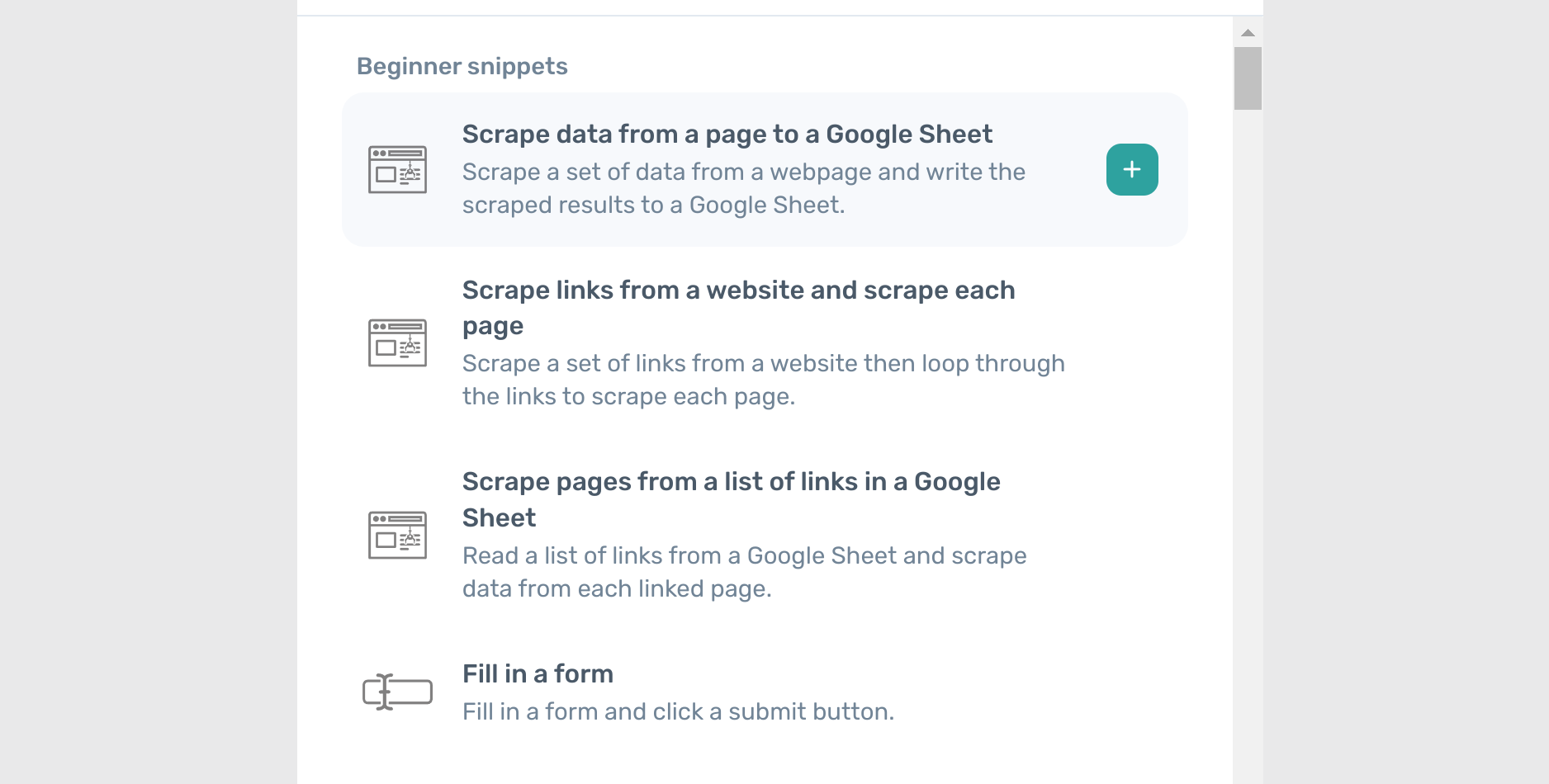

# Use beginner snippets

Beginner snippets are the most common combinations of steps used when getting started with Axiom.

You can use snippets such as "Scrape data from a page to a Google Sheet" and "Scrape links from a website and scrape each page" as a starting point for your scraping.

# Select data for scraping

Once the Get data from a url step has been added, you can choose what data to extract. To start this process, click the "Select" button. This will then open the selector tool and the scrape results preview table.

To select data for a column, click something you want to scrape on the page. Anything selected is highlighted in orange and shows up in the preview table at the bottom of the page.

If an element you want to scrape is missing from the selection, click on it too. The tool automatically tries to pick up a pattern in what you have clicked and selects similar elements. It usually takes only 2 or 3 clicks to establish the pattern, but sometimes it may take more. Keep selecting until all the data you want is highlighted in orange.

If the tool selects too much, you can also remove items from a column by clicking on them. This will exclude them from the column's data.

# Use the scrape results preview table

Once you have selected data, a preview of your selection appears in a table at the bottom of the page.

Axiom lets you add scraped data into a table structure, made up of rows and columns. The results of your current selections are displayed in the preview table.

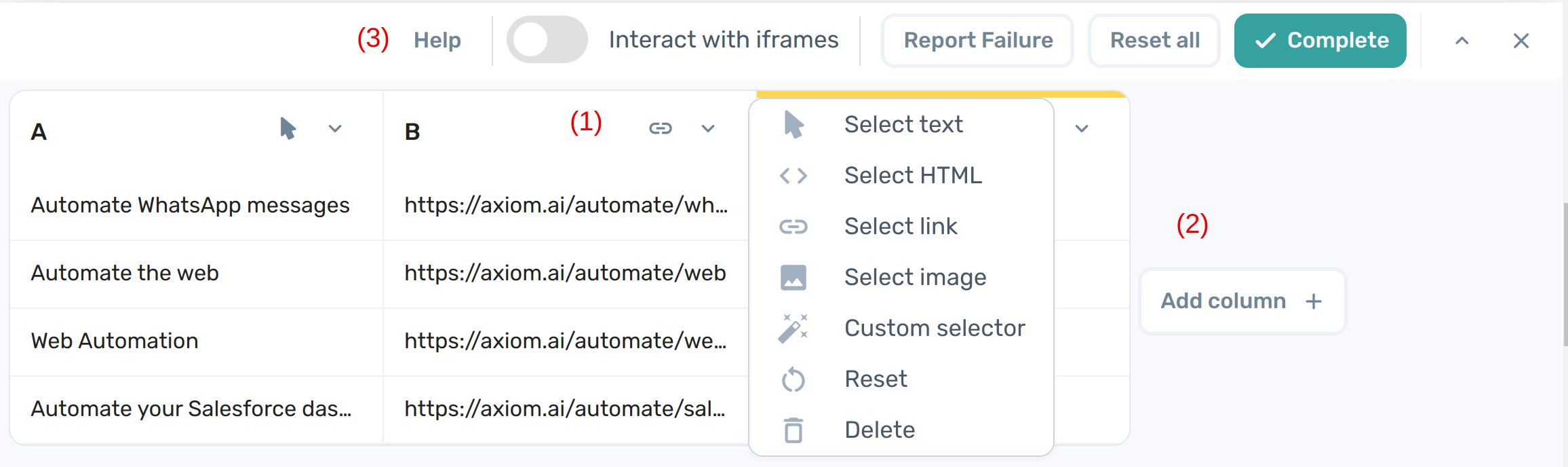

The preview table contains several settings:

- Arrow to access the dropdown menu, where you choose the data type for scraping and additional actions:

  - Select the data type of an element (see Change data types for each column)

  - Custom selector - specify a custom selector to scrape (see Select with a custom selector)

  - Reset - clear the data selected for this column while leaving the column in place

  - Delete - remove the column from the dataset

- Add column - add a new column to the table (see Add new columns of data)

- Top menu with additional options:

  - Help - open the documentation

  - Interact with iframes - select elements within iframes

  - Report failure - report an issue with the scraper for our tech team to investigate

  - Reset all - clear all selections and columns so you can start again

  - Complete - save all selections and columns and return to the step builder

  - Pin to the top - by default the preview panel is pinned to the bottom of the screen. Click the top arrow icon to pin the table to the top of the screen.

  - Close table preview - cancel the changes and return to the step builder

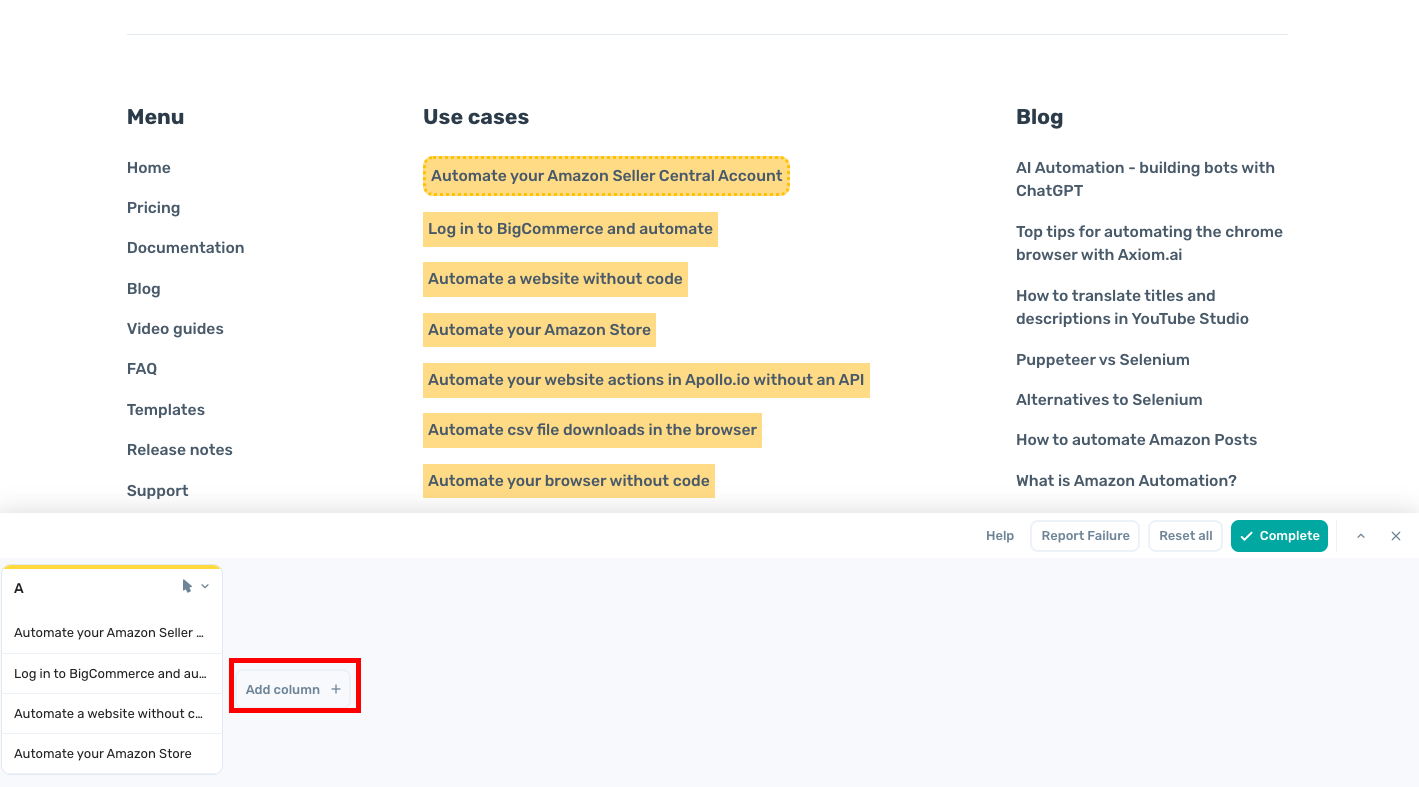

# Add new columns of data

Once you have one column set up, you can click the "Add column +" button on the preview table to add a new column. This lets you build more complex data sets consisting of multiple fields, such as names, addresses, and phone numbers.

NOTE: when you select data that has more than one column and more than one row, Axiom tries to group the elements to keep the relationships between them. It assumes that the data you are scraping is a list of entries with a common format. For example, if column A contains names and column B contains phone numbers, the tool automatically tries to match each name to the phone number it is associated with on the web page.

If the tool cannot find a good grouping between the elements you have selected, you may get unexpected results. When choosing which elements to put into columns, make sure that each column is related to the others in the data set.
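One way to picture this grouping is sketched below in Python. It is only an illustration under stated assumptions, not Axiom's actual implementation: the markup, the class names `entry`, `name` and `phone`, and the helpers `scrape_rows` and `scrape_columns_independently` are all hypothetical. Each row is built from a single parent entry, so a name is only ever paired with a phone number found inside the same entry.

```python
import xml.etree.ElementTree as ET

# Hypothetical listing markup; the second entry has no phone number.
PAGE = """
<ul>
  <li class="entry"><span class="name">Ada</span><span class="phone">555-0100</span></li>
  <li class="entry"><span class="name">Grace</span></li>
  <li class="entry"><span class="name">Alan</span><span class="phone">555-0102</span></li>
</ul>
"""


def cell_text(entry, css_class):
    """Return the text of the child span with the given class, or ""."""
    cell = entry.find(f"span[@class='{css_class}']")
    return cell.text if cell is not None else ""


def scrape_rows(markup):
    """Build one row per entry so related cells stay together."""
    root = ET.fromstring(markup)
    return [
        {"name": cell_text(entry, "name"), "phone": cell_text(entry, "phone")}
        for entry in root.iter("li")
    ]


def scrape_columns_independently(markup):
    """Naive alternative: collect each column on its own, then pair by position."""
    root = ET.fromstring(markup)
    names = [s.text for s in root.iter("span") if s.get("class") == "name"]
    phones = [s.text for s in root.iter("span") if s.get("class") == "phone"]
    return list(zip(names, phones))
```

In the naive version, Grace ends up paired with 555-0102, which belongs to Alan. Grouping by entry leaves her phone cell empty instead, which is why unrelated columns can produce unexpected rows.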

# Change data types for each column

To change a column's result type, click the dropdown button in that column's header. Each column of data can have its own data type.

Currently you can select from the following options:

- Select text - scrape textual data

- Select HTML - scrape the HTML of an element

- Select link - extract the URL of an element

- Select image - extract the link to an image file

You can switch between columns at any time by clicking on the column header.
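To make the difference between the four data types concrete, here is a rough Python sketch of what each one might return for the same element. The element, its attribute values, and the `extract` helper are hypothetical and only approximate the behavior described above; Axiom's own extraction may differ in detail.

```python
import xml.etree.ElementTree as ET

# Hypothetical element that a column selection might point at.
ELEMENT = ET.fromstring(
    '<a href="/products/42"><img src="/img/42.png" alt="Widget"/> Blue <b>Widget</b></a>'
)


def extract(element, data_type):
    """Return the value a column of the given data type might hold."""
    if data_type == "text":
        # Text of the element and all of its descendants.
        return "".join(element.itertext()).strip()
    if data_type == "html":
        # Markup of the element itself, including its children.
        return ET.tostring(element, encoding="unicode")
    if data_type == "link":
        # URL the element points to.
        return element.get("href")
    if data_type == "image":
        # Source of the element if it is an image, else of the first image inside it.
        image = element if element.tag == "img" else element.find(".//img")
        return image.get("src") if image is not None else None
    raise ValueError(f"unknown data type: {data_type}")
```

For this element, `extract(ELEMENT, "text")` gives `Blue Widget`, `"link"` gives `/products/42`, and `"image"` gives `/img/42.png`.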

# Set up a pager

A pager is a navigational element on a web page that lets users move through multiple pages of content. It is typically found at the bottom of pages with extensive data, such as search results, forum threads, or product listings. Examples include the "Next" and "Previous" buttons on a Google search results page, the numbered page links on an eBay listings page, or the arrow icons on a multi-page news article.

If the data you wish to scrape uses a pager, click the "Find pager (if any)" button and select the "Next" element that the scraper should click to advance to the next page.

Do not select the link to the second page: the bot would keep selecting the second page instead of cycling through all the pages as needed!
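The difference between the two choices can be seen in a small Python simulation. Everything here is an assumption made for illustration: the three-page `SITE`, its URLs, and the `scrape_with_pager` helper are hypothetical and do not describe how Axiom works internally. The "Next" link is relative to the current page, while the "2" link always leads to the same place.

```python
# Hypothetical three-page site: each page lists its rows and the targets of
# two kinds of pager link, the relative "Next" button and the fixed "2" link.
SITE = {
    "/results?page=1": {"rows": ["r1", "r2"], "next": "/results?page=2", "page_2": "/results?page=2"},
    "/results?page=2": {"rows": ["r3", "r4"], "next": "/results?page=3", "page_2": "/results?page=2"},
    "/results?page=3": {"rows": ["r5"], "next": None, "page_2": "/results?page=2"},
}


def scrape_with_pager(start_url, pager, max_pages=6):
    """Scrape a page, follow the chosen pager element, and repeat."""
    visited, rows = [], []
    url = start_url
    # max_pages is a safety cap so a pager that never ends cannot loop forever.
    while url is not None and len(visited) < max_pages:
        page = SITE[url]
        visited.append(url)
        rows.extend(page["rows"])
        url = page[pager]  # None means there is nothing left to click
    return visited, rows
```

With `pager="next"` the loop visits pages 1, 2 and 3 once each and then stops. With `pager="page_2"` it visits page 1 and then page 2 over and over until the safety cap is reached, never getting to page 3.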

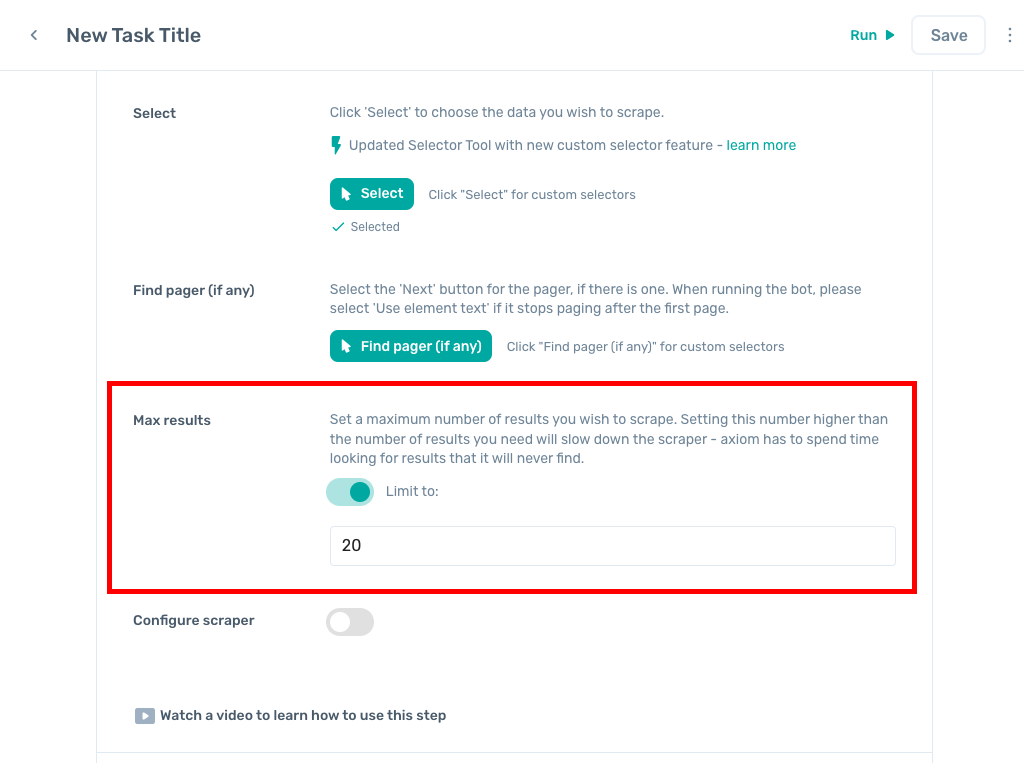

# Adjust the results limits in a scraper

By default, the scraper is limited to a maximum of either 20 results or 1 result, depending on the context in which the step was added. You can turn this limit off by clicking the toggle under "Max Results", which allows all results to be selected.

A "result" here is a single row of data, so many columns can be selected and this will still only count as one result. For example, the following data scrape is one result, because it is one row long:

| A | B | C |
|---|---|---|
| James | Smith | mailto:jsmith@xxxx.com |

Setting a result limit lets Axiom process the information more quickly, which is useful when testing your automation. If no upper limit is set, Axiom waits for a while to see if anything new loads before continuing.

The "Max Results" field applies only to its own scrape step, not to the bot as a whole. This means that if you are only scraping a single record from a page, you should set it to 1 for best results. A default of 1 is set automatically for scrapers added as a sub-step of a Loop through data step.
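As a loose analogy for how a result limit counts rows and saves work, consider the Python sketch below. The `load_rows` generator and the `scrape` helper are hypothetical stand-ins, not Axiom functions: rows are produced lazily, and the limit stops collection as soon as enough rows have arrived.

```python
from itertools import islice


def load_rows(total, log):
    """Yield rows lazily, recording each one that had to be loaded."""
    for index in range(total):
        log.append(index)
        # One result is one row, however many columns it has.
        yield {"A": f"first-{index}", "B": f"last-{index}", "C": f"mail-{index}"}


def scrape(rows, max_results=None):
    """Collect rows, stopping early when max_results is set."""
    if max_results is None:
        # No limit: keep going until the source has nothing more to give.
        return list(rows)
    return list(islice(rows, max_results))
```

With `max_results=20` only the first 20 rows are ever loaded, even if 100 are available, and each of them still carries all three columns.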

# Loop through pages and scrape data

Often you will want to perform a set of steps on a series of pages rather than just one. Examples include going through a list of accounts in a CRM and updating account details, or going through a series of product pages and retrieving product information.

Axiom provides two beginner snippets to get started, depending on whether your list of pages is retrieved from a listing page, or from an existing data source, like a Google Sheet.

# Scrape links from a website and scrape each page

In the first step, scrape the links to the pages you want to visit. This step works exactly as described in Scrape a page. You can usually find these links on an app's search or listing page.

In the second step, the Loop through data step visits these links. You can perform any actions you like on each page by adding new sub-steps to the Loop through data step.

# Scrape pages from a list of links in a Google Sheet

In this snippet, you provide Axiom with a list of links to visit using a Google Sheet. Again, you can perform any actions you like on each page by adding new sub-steps to the Loop through data step.

# Perform actions in loops

Click on the "Add step" button, and choose the appropriate action. For example, you might scrape further data, as described in Scrape a page, or automate some UI interactions, such as clicks, as described in Automate the UI.
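The overall pattern of scraping links and then looping through them with sub-steps can be sketched in Python as follows. This is an illustration only: the `PAGES` dictionary stands in for real page loads, and `scrape_links`, `scrape_detail` and `loop_through_data` are hypothetical names chosen to mirror the steps, not part of Axiom.

```python
# Hypothetical site contents, standing in for real page loads.
PAGES = {
    "/products": {"links": ["/products/1", "/products/2"]},
    "/products/1": {"title": "Widget", "price": "9.99"},
    "/products/2": {"title": "Gadget", "price": "19.99"},
}


def scrape_links(listing_url):
    """Step 1: scrape the links to visit from a listing page."""
    return PAGES[listing_url]["links"]


def scrape_detail(url):
    """Sub-step: scrape the fields of interest from one page."""
    page = PAGES[url]
    return {"url": url, "title": page["title"], "price": page["price"]}


def loop_through_data(links, sub_steps):
    """Step 2: visit each link and run every sub-step on it."""
    results = []
    for link in links:
        for step in sub_steps:
            results.append(step(link))
    return results
```

Calling `loop_through_data(scrape_links("/products"), [scrape_detail])` yields one record per product page. For the Google Sheet snippet, the list of links would come from the sheet instead of `scrape_links`; the loop itself stays the same.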